Blog

Expert insight on the latest trends, solutions and best practices in logistics and supply chain

AI & Connected Ecosystem

AI’s impact on transport: A 2026 Pulse Report Q&A with Trimble’s Michael Kornhauser

DIGITAL TRANSFORMATION

New white paper from Trimble and FreightWaves explores logistics tech challenges

DIGITAL TRANSFORMATION

A new look, the same trusted partner: Welcome to Trimble's new digital home for transportation and logistics

DIGITAL TRANSFORMATION

Transportation in 2026: From experimentation to acceleration

TRANSPORTATION MANAGEMENT

Why Private Fleets Can't Afford to Ignore Optimization Software

DIGITAL TRANSFORMATION

SIMA and Trimble: Driving smarter logistics together

TRANSPORTATION MANAGEMENT

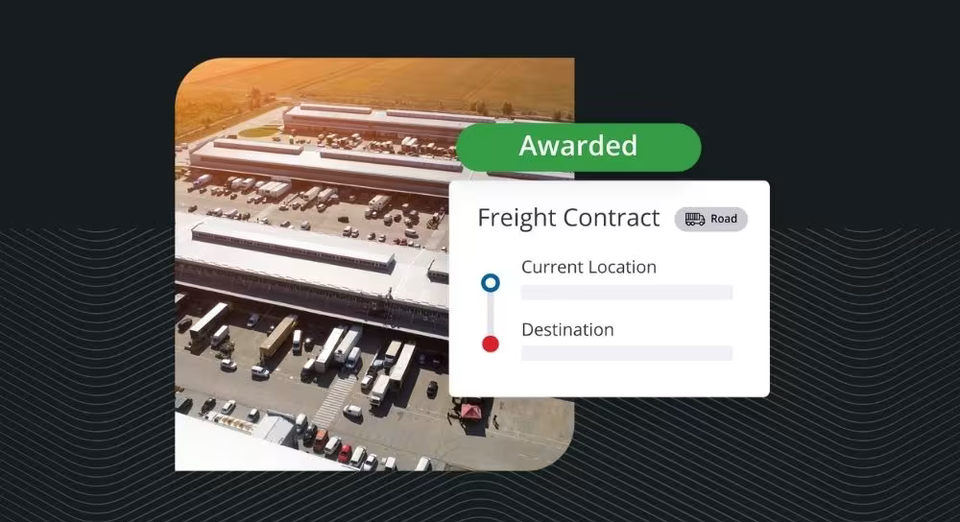

Trimble Freight Marketplace launches in North America with Procter & Gamble as first shipper customer

DIGITAL TRANSFORMATION

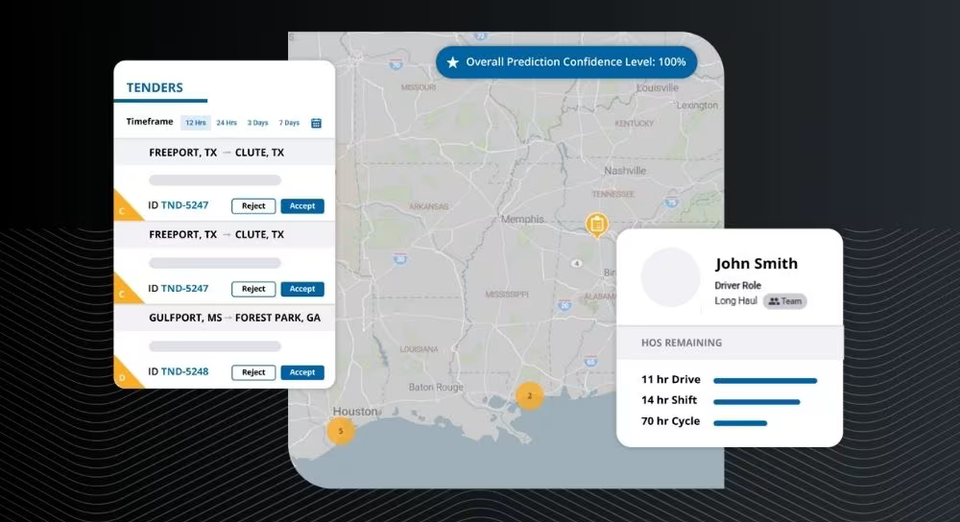

Trimble introduces AI-powered innovations at 2025 Trimble Insight Tech Conference

DIGITAL TRANSFORMATION

AI-powered next-gen Trimble TMS debuts at 2025 Trimble Insight Tech Conference

DIGITAL TRANSFORMATION

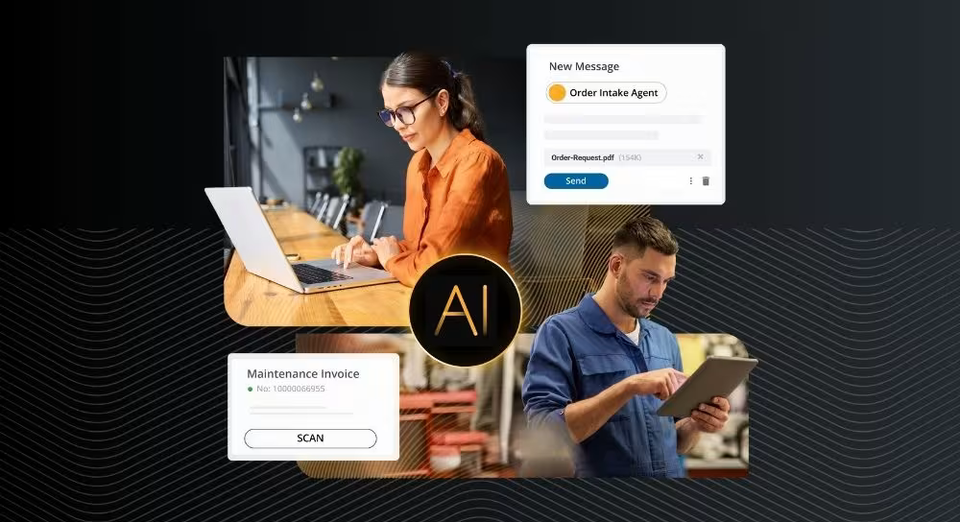

Trimble announces new AI agents and workflows to automate transportation operations

DIGITAL TRANSFORMATION

Trimble expands connected solution integrations to address transportation industry challenges
